Source: AFP

The 2024 race for the White House faces the prospect of an AI-enabled firestorm of disinformation, with a robocall impersonating US President Joe Biden already causing particular alarm over audio hoaxes.

“What a bunch of malarkey,” the phone message said, digitally spoofing Biden’s voice and echoing one of his signature catchphrases.

The robocall urged New Hampshire residents not to vote in last month’s Democratic primary, prompting state authorities to launch an investigation into possible voter suppression.

It also prompted calls from campaigners for tighter guardrails around AI-powered tools or a complete ban on robocalls.

Disinformation researchers fear rampant abuse of AI-powered apps in a pivotal election year thanks to proliferating voice-cloning tools that are cheap and easy to use and hard to detect.

“This is definitely the tip of the iceberg,” Vijay Balasubramaniyan, CEO and co-founder of cybersecurity firm Pindrop, told AFP.

“We can expect to see many more deepfakes this election cycle.”

A detailed analysis published by Pindrop said a text-to-speech system developed by AI voice-cloning startup ElevenLabs was used to create the Biden robocall.

The scandal comes as campaigners on both sides of the US political aisle harness advanced artificial intelligence tools for effective campaign messaging and as tech investors pump millions of dollars into voice-cloning startups.

Balasubramaniyan declined to say whether Pindrop had shared its findings with ElevenLabs, which last month announced a funding round from investors that Bloomberg News reported valued the company at $1.1 billion.

ElevenLabs did not respond to AFP’s repeated requests for comment. Its website directs users to a free text-to-speech generator to “create natural AI voices instantly in any language.”

Read also

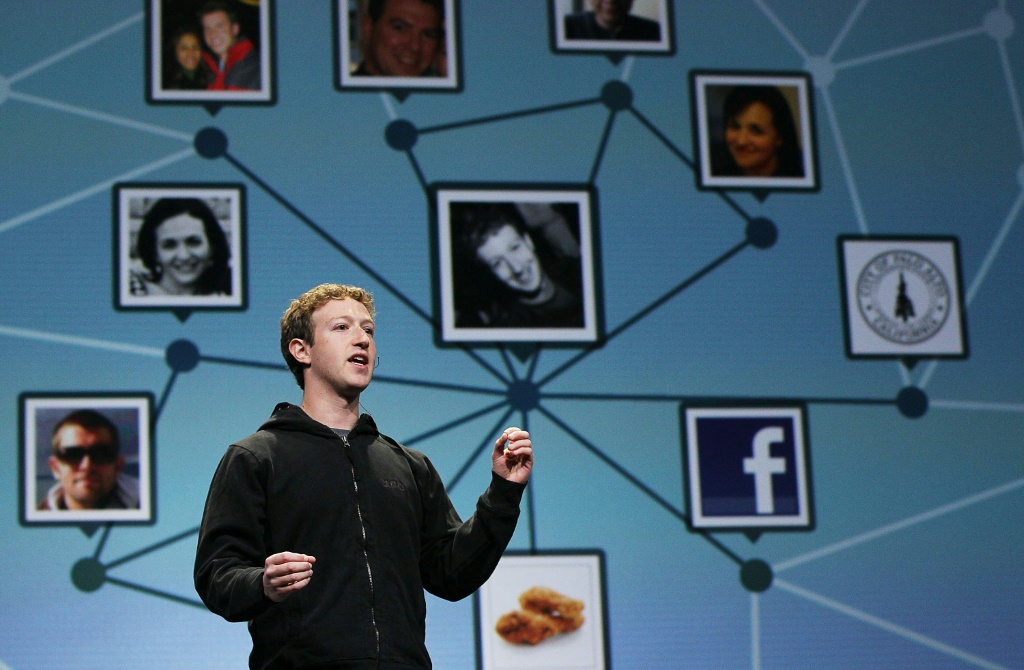

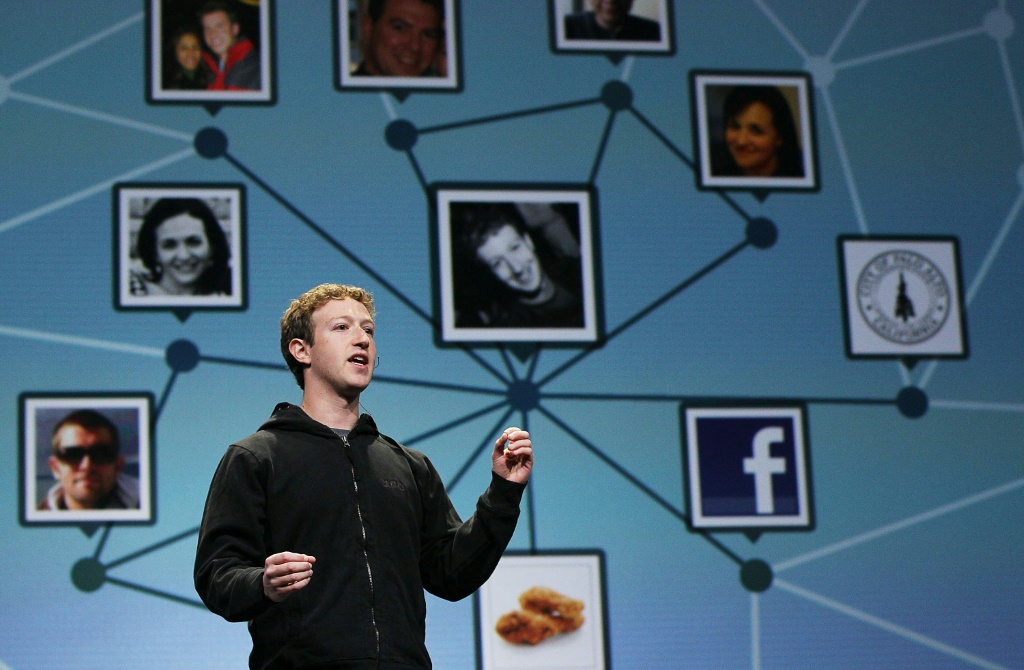

Facebook, the old timer social network, turns 20 years old

Under its safety guidelines, the company said users are allowed to create voice clones of politicians such as Donald Trump without their permission if they “express humor or mockery” in a way that makes it “clear to the listener that what they are hearing is a parody and not authentic content.”

“Electoral chaos”

US regulators are considering making AI-generated robocalls illegal, with the fake Biden call adding new impetus to the effort.

“The political deepfake moment is here,” said Robert Weissman, president of the advocacy group Public Citizen.

“Policymakers must hurry to implement safeguards or we face electoral chaos. The New Hampshire deepfake is a reminder of the many ways deepfakes can sow confusion.”

Researchers worry about the impact of artificial intelligence tools that create video and text so seemingly real that voters have trouble deciphering truth from fiction, undermining trust in the electoral process.

But audio deepfakes used to impersonate or defame celebrities and politicians around the world have caused the most concern.

“Of all the surfaces (video, image, audio) that AI can use to suppress voters, audio is the most vulnerable,” said Tim Harper, senior policy analyst at the Center for Democracy and Technology.

“It’s easy to clone a voice using AI and hard to recognize.”

“Electoral integrity”

“The ease of creating and spreading false audio content complicates an already hyper-polarized political landscape, undermining trust in the media and enabling anyone to claim that fact-based ‘evidence has been fabricated,’” Wasim Khaled, managing director of Blackbird.AI, told AFP.

Such concerns are heightened as the proliferation of audio AI tools outpaces detection software.

China’s ByteDance, owner of the hugely popular TikTok platform, recently introduced StreamVoice, an artificial intelligence tool to transform a user’s voice in real time into any desired alternative.

“Although the attackers used ElevenLabs this time, it is likely to be a different AI system in future attacks,” Balasubramaniyan said.

“It is imperative that there are enough safeguards available on these tools.”

Balasubramaniyan and other researchers recommended building audio watermarks or digital signatures into the tools as possible safeguards, as well as measures that make them available only to authenticated users.

“Even with these actions, detecting when these tools are being used to create harmful content that violates your terms of service is very difficult and very expensive,” Harper said.

“(It) requires an investment in trust and safety and a commitment to building with electoral integrity centered as a risk.”
