Meta has unveiled a series of new safety features designed to protect users, especially young people, from “sextortion” and intimate image abuse.

The social media giant – which owns Facebook, Instagram and WhatsApp – has confirmed that it will start testing a nudity filter in Direct Messages (DMs) on Instagram.

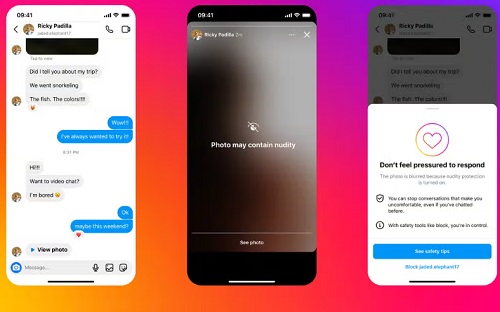

This feature, called Nudity Protection, will be enabled by default for people under 18 and will automatically blur images detected to contain nudity, better protecting users from seeing unwanted nudity in their DMs.

When receiving nude images, users will also see a message urging them not to feel pressured to reply, along with an option to block the sender and report the conversation.

With the filter enabled, people who send images containing nudity will also see a message reminding them to be careful when sending sensitive photos and will be given the opportunity to unsend those photos.

The tool uses on-device machine learning to analyze whether an image contains nudity, meaning it will work inside end-to-end encrypted chats, and Meta said it will only see an image if the user chooses to report it to the company.

“Financial extortion is a heinous crime,” Meta said in a blog post about the updates.

“We’ve spent years working closely with experts, including those experienced in fighting these crimes, to understand the tactics fraudsters use to find and blackmail victims online so we can develop effective ways to stop them.

“Today, we’re sharing an overview of our latest work to tackle these crimes. This includes new tools we’re testing to help protect people from sextortion and other forms of intimate image abuse, and to make it as difficult as possible for scammers to find potential targets – on Meta’s apps and across the internet.

“We are also trialling new measures to support young people in recognizing and protecting themselves from sextortion scams.”

Elsewhere, the social media giant said it is testing new detection technology to help identify accounts that may be involved in sextortion scams and limit their ability to interact with other users, especially younger ones.

Meta said message requests from these suspicious accounts will be routed directly to a user’s hidden requests folder.

Suspicious accounts will also no longer see the “Message” button on a teenager’s profile, even if the two accounts are already connected, and the company is testing ways to hide younger users from those accounts in people’s follower lists to make them harder to find.

Meta added that it is also testing new pop-up messages for people who may have interacted with such accounts – directing them to support and help if they need it.

In addition, the company said it is expanding its work with other platforms to share details about accounts and behaviors that violate child safety policies as part of the Lantern program created last year.

“This industry collaboration is critical because predators are not limited to a single platform – and neither are extortionists,” Meta said.

“These criminals target victims across the various apps they use, often moving their conversations from one app to another.

“That’s why we’ve started sharing more sextortion-specific signals with Lantern, to build on this important partnership and try to stop sextortion scams not just on individual platforms, but across the internet.”

Lantern is a cross-industry program through which technology companies share information about suspicious accounts.